Email phishing is no longer the biggest social-engineering threat your company faces.

In 2026, the real danger sounds exactly like your CEO.

Imagine receiving a live call. The voice is familiar, urgent, and authoritative. It requests a fast crypto transfer or a confidential wire. You comply—because everything sounds legitimate.

But it’s not.

It’s a cloned voice powered by AI.

The rise of corporate deepfake scams has shifted the attack surface from inboxes to real-time conversations. And unless you can detect deepfake audio in real time, your organization is exposed.

This guide breaks down how the exploit works—and how to stop it.

The Mechanics of Voice Cloning in 2026

AI voice cloning has evolved from novelty to weapon.

Attackers no longer need hours of audio. In some cases, as little as three seconds of clean speech is enough to produce a convincing replica, and longer samples make the clone even harder to spot.

Here’s how it works in practice.

First, attackers scrape public audio. This includes YouTube interviews, podcasts, earnings calls, and even voice notes shared on social platforms.

Next, they feed this data into advanced speech synthesis models. These models analyze tone, pitch, cadence, and emotional patterns.

Finally, they generate speech in the target’s voice, often live during a call, either by converting the attacker’s own voice in real time or by feeding typed text to a fast text-to-speech model.

The result is a highly convincing impersonation that slips past controls built for email, such as spam filters, link scanners, and sender authentication.

In my experience, the most dangerous aspect isn’t the voice quality. It’s the timing. Attackers strike during moments of urgency—end-of-quarter payments, crisis calls, or executive travel windows.

That’s what makes enterprise audio spoofing so effective.

How to Detect Deepfake Audio in Real-Time (The Technical Stack) 🔍

To detect deepfake audio in real time, you need a layered approach that combines machine detection with human awareness.

Algorithmic Detection Tools

Detecting synthetic audio requires analyzing patterns humans can’t hear.

These tools use machine learning to identify anomalies in frequency, waveform consistency, and spectral signatures.

Deepfake detection tools analyze audio signals for inconsistencies → They compare real human vocal patterns with AI-generated artifacts → For example, synthetic voices often show unnatural frequency smoothing or phase irregularities.
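To make “patterns humans can’t hear” concrete, here is a toy Python sketch of one spectral feature, spectral flatness: the ratio of the geometric mean to the arithmetic mean of a frame’s power spectrum. It sits close to 1 for noise-like sound and close to 0 for tonal sound. Treat it as an illustration under stated assumptions, not as how Pindrop, Resemble AI, or Veridas work: real detectors feed many features (or raw spectrograms) into trained models, and no single number separates real from synthetic speech. The function names are hypothetical.

```python
import cmath
import math
import random


def power_spectrum(frame: list[float]) -> list[float]:
    """One-sided power spectrum via a naive DFT (fine for short frames)."""
    n = len(frame)
    spectrum = []
    for k in range(n // 2 + 1):
        acc = sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        spectrum.append(abs(acc) ** 2)
    return spectrum


def spectral_flatness(frame: list[float], eps: float = 1e-12) -> float:
    """Geometric mean / arithmetic mean of the power spectrum.

    Near 1.0 for noise-like frames, near 0.0 for pure tones.
    `eps` keeps log() defined for empty frequency bins.
    """
    power = [p + eps for p in power_spectrum(frame)]
    geo_mean = math.exp(sum(math.log(p) for p in power) / len(power))
    arith_mean = sum(power) / len(power)
    return geo_mean / arith_mean


if __name__ == "__main__":
    n = 256
    tone = [math.sin(2 * math.pi * 8 * t / n) for t in range(n)]  # pure tone
    rng = random.Random(0)
    noise = [rng.gauss(0.0, 1.0) for _ in range(n)]              # white noise
    print(f"tone flatness:  {spectral_flatness(tone):.4f}")
    print(f"noise flatness: {spectral_flatness(noise):.4f}")
```

A detector might track how features like this vary from frame to frame and compare those statistics with ones learned from genuine recordings. Unusually smooth or uniform spectra would be one input among many, never a verdict on their own.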

Here’s how leading enterprise tools compare:

| Tool | Core Capability | Detection Method | Best Use Case |
|---|---|---|---|
| Pindrop | Voice authentication | Acoustic fingerprinting | Call centers |
| Resemble AI | Deepfake detection | Spectral anomaly detection | Media & enterprise |
| Veridas | Biometric verification | Voice biometrics + liveness | Banking |

When I tested these systems, I noticed one pattern: the best tools don’t rely on a single signal. They combine multiple detection layers, including behavioral biometrics.

That’s critical for AI voice cloning defense.

The Latency Tell

Even the best deepfake systems struggle with real-time responsiveness.

Latency in deepfake audio refers to micro-delays introduced by speech generation → Real-time voice conversion and text-to-speech pipelines need processing time before each reply, and an attacker typing responses adds even more → For example, a slight pause before answering a complex question can indicate synthetic generation.

You should train teams to listen for:

- Micro-delays before responses

- Slightly unnatural pacing

- Overly consistent tone without human variation

- Digital artifacts like clipping or flattening

These signals are subtle, and no single one proves a voice is fake, but together they are worth acting on.

Moreover, attackers often fail when conversations go off-script. Asking unexpected questions can expose delays or incoherent responses.

That’s your opening.
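If your call platform timestamps speech turns, the latency tell can also be checked mechanically. This minimal Python sketch assumes you already have (question end, reply start) times in seconds for each exchange; the 700 ms threshold, the three-turn minimum, and the function names are hypothetical, not validated values. It uses the median gap so one long, thoughtful pause doesn’t trigger a flag.

```python
from statistics import median

# Hypothetical threshold, not a validated value. Natural turn-taking gaps
# are often a few hundred milliseconds, so a sustained median well above
# that is worth a closer look.
DEFAULT_THRESHOLD_MS = 700.0


def response_gaps_ms(turns: list[tuple[float, float]]) -> list[float]:
    """turns: (question_end_s, reply_start_s) pairs from a call timeline."""
    return [(reply - question) * 1000.0 for question, reply in turns]


def latency_flag(
    turns: list[tuple[float, float]],
    threshold_ms: float = DEFAULT_THRESHOLD_MS,
    min_turns: int = 3,
) -> tuple[float, bool]:
    """Return (median reply gap in ms, whether the call deserves verification)."""
    gaps = response_gaps_ms(turns)
    if len(gaps) < min_turns:
        # Too few exchanges to judge; report what we have but don't flag.
        return (median(gaps) if gaps else 0.0, False)
    typical = median(gaps)
    return (typical, typical > threshold_ms)


if __name__ == "__main__":
    call = [(2.0, 3.1), (9.5, 10.4), (15.2, 16.3)]
    typical, flagged = latency_flag(call)
    print(f"median gap: {typical:.0f} ms, verify out of band: {flagged}")
```

Treat a flag as a reason to switch to out-of-band verification, not as proof. VoIP and mobile networks add delay of their own, and real people pause before hard questions too.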

The “Zero-Trust” Human Verification Protocol 🔐

Technology alone won’t save you.

The most effective defense combines tools with human protocols. This is where zero-trust voice verification becomes essential.

The Architect’s Blueprint

Zero-trust means you verify every request—even if it sounds legitimate.

Zero-trust voice verification assumes no voice is trusted by default → Every sensitive request must be verified through independent channels → For example, a CEO’s voice request for funds must be confirmed via secure messaging.

In practice, this approach eliminates single-point failures.

Even if an attacker perfectly clones a voice, they still can’t bypass your verification system.
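Here is one way to express the zero-trust rule in code: a minimal Python sketch, assuming a hypothetical internal directory of contact details registered in advance. The key design choice is that confirmation must arrive on a different channel than the request and must use contact details from the directory, never ones the caller offers during the call. `DIRECTORY`, `SENSITIVE_ACTIONS`, and the channel names are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical internal directory: contact details registered in advance,
# never taken from the incoming request itself.
DIRECTORY = {
    "ceo": {"secure_messaging": "@ceo-verified", "desk_phone": "+1-555-0100"},
}

# Hypothetical set of actions that always need out-of-band confirmation.
SENSITIVE_ACTIONS = {"wire_transfer", "crypto_transfer", "change_bank_details"}


@dataclass
class Request:
    claimed_identity: str  # who the caller says they are
    action: str
    arrived_via: str       # e.g. "voice_call"


@dataclass
class Confirmation:
    channel: str  # channel used to confirm, e.g. "secure_messaging"
    contact: str  # address or number actually used to confirm


def is_verified(request: Request, confirmation: Optional[Confirmation]) -> bool:
    """Zero-trust rule: no voice is trusted by default for sensitive actions."""
    if request.action not in SENSITIVE_ACTIONS:
        return True
    if confirmation is None:
        return False
    if confirmation.channel == request.arrived_via:
        # Confirming on the same channel proves nothing: the attacker may control it.
        return False
    # The contact used must match what the directory already holds for that person.
    known = DIRECTORY.get(request.claimed_identity, {})
    return known.get(confirmation.channel) == confirmation.contact
```

A callback to the registered desk phone counts as independent here, because your team dials a number it already trusts instead of staying on the inbound call.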

The Duress Word System

This is one of the simplest and most effective defenses.

A duress word is a pre-agreed secret phrase used to verify identity → Teams rotate this word daily or weekly → For example, a finance team requires the correct duress word before approving any transaction.

It works because a cloned voice copies how someone sounds, not what they know, and a secret that changes regularly is hard to steal and reuse.

In my experience, companies that implement this system reduce successful spoofing attacks dramatically.

Keep it simple, memorable, and regularly updated.
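One way to rotate the word without announcing a new one every week is to derive it from a secret the team shares once, in person, plus the current ISO week, in the spirit of time-based one-time passwords. This is a hypothetical Python sketch: the word list, the secret, and the function names are assumptions, and a real list should be far longer than eight words so the current word can’t be guessed in a few tries.

```python
import datetime
import hashlib
import hmac

# Hypothetical word list. A real one should be much longer (hundreds of
# words or more) so the current word can't be guessed by trial.
WORDS = ["granite", "harbor", "lantern", "meadow", "orbit", "saffron", "tundra", "velvet"]


def word_for_week(shared_secret: bytes, day: datetime.date) -> str:
    """Derive the code word for the ISO week containing `day`."""
    year, week, _ = day.isocalendar()
    label = f"{year}-W{week:02d}".encode()
    digest = hmac.new(shared_secret, label, hashlib.sha256).digest()
    # Map the first 4 bytes of the MAC onto the word list.
    return WORDS[int.from_bytes(digest[:4], "big") % len(WORDS)]


def check_word(shared_secret: bytes, spoken: str, day: datetime.date) -> bool:
    """Compare the word the caller gives against this week's expected word."""
    expected = word_for_week(shared_secret, day).encode()
    given = spoken.strip().lower().encode()
    return hmac.compare_digest(expected, given)
```

Both sides compute the same word independently, so nothing about it needs to be sent ahead of the call. The weak point is the shared secret itself: if it leaks, every future word leaks with it.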

Omni-Channel Verification

Never trust a single channel.

Omni-channel verification requires confirming requests across multiple platforms → A voice request must be verified via text or secure app → For example, confirming a payment via encrypted messaging before execution.

Here’s how to implement it effectively:

- Verify voice requests via encrypted messaging apps

- Use internal approval systems for financial actions

- Require multi-person authorization for high-value transactions

This approach stops most voice-only deepfake scams before any money moves.

Because attackers typically control only one channel.
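The three rules above can be combined into a single approval check. In this minimal Python sketch, which assumes a hypothetical $10,000 threshold and hypothetical channel names, approvals given over voice or video don’t count, the requester can’t approve their own payment, and larger amounts need two distinct approvers.

```python
from dataclasses import dataclass, field

# Hypothetical policy values; set these from your own payment policy.
MULTI_PERSON_THRESHOLD = 10_000  # amounts at or above this need two approvers
VOICE_CHANNELS = {"voice_call", "video_call"}


@dataclass
class Approval:
    approver: str
    channel: str  # where the approval was given, e.g. "secure_app"


@dataclass
class PaymentRequest:
    requester: str
    amount: float
    approvals: list[Approval] = field(default_factory=list)


def can_execute(req: PaymentRequest) -> bool:
    """Allow the payment only if enough independent approvals exist."""
    independent = {
        a.approver
        for a in req.approvals
        # Voice/video approvals don't count (a cloned voice could give them),
        # and requesters can't approve their own payments.
        if a.channel not in VOICE_CHANNELS and a.approver != req.requester
    }
    required = 2 if req.amount >= MULTI_PERSON_THRESHOLD else 1
    return len(independent) >= required
```

Counting distinct approver names, rather than approval records, stops one person from satisfying the two-person rule by confirming twice.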

Protecting Web3 & Financial Assets from Social Engineering 💰

If there’s money involved, attackers will target it.

And in 2026, Web3 ecosystems are prime targets.

Crypto transactions are fast, irreversible, and often lack centralized oversight. That makes them ideal for exploitation through voice-based social engineering.

To counter this, organizations must rethink how transactions are authorized.

Multi-signature wallets require multiple approvals before executing a transaction → Each signer verifies the request independently → For example, a 3-of-5 wallet requires three separate approvals before funds move.

This structure ensures that a single compromised voice cannot trigger a transfer.

Even if an attacker fools one person, the system holds.
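The 3-of-5 logic can be sketched in a few lines of Python. This is a simplified off-chain model under loud assumptions: real multi-signature wallets verify public-key signatures (typically in a smart contract), whereas this sketch uses per-signer HMAC keys purely so it runs with the standard library. The signer names, keys, and transaction fields are hypothetical. What it does show faithfully is that each approval is bound to the exact transaction details, so an approval obtained for one destination or amount can’t be reused for another.

```python
import hashlib
import hmac
import json

# Stand-in keys for five hypothetical signers. A real multi-sig wallet uses
# each signer's private key and verifies public-key signatures on-chain;
# HMAC is used here only so the sketch runs with the standard library
# (with HMAC, the verifier would need every signer's secret).
SIGNER_KEYS = {f"signer{i}": f"demo-key-{i}".encode() for i in range(1, 6)}
THRESHOLD = 3  # 3-of-5


def tx_digest(tx: dict) -> bytes:
    """Hash a canonical encoding so an approval covers these exact details."""
    return hashlib.sha256(json.dumps(tx, sort_keys=True).encode()).digest()


def approve(signer: str, tx: dict) -> tuple[str, bytes]:
    """Produce this signer's approval tag for the transaction (stand-in for signing)."""
    tag = hmac.new(SIGNER_KEYS[signer], tx_digest(tx), hashlib.sha256).digest()
    return signer, tag


def meets_threshold(tx: dict, approvals: list[tuple[str, bytes]]) -> bool:
    """Count distinct signers whose approval matches this exact transaction."""
    digest = tx_digest(tx)
    valid = set()
    for signer, tag in approvals:
        key = SIGNER_KEYS.get(signer)
        if key is None:
            continue  # unknown signer
        expected = hmac.new(key, digest, hashlib.sha256).digest()
        if hmac.compare_digest(expected, tag):
            valid.add(signer)
    return len(valid) >= THRESHOLD
```

In the opening scenario, a cloned voice would have to fool three separate people, each checking the request independently, before any funds could move.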

Moreover, combining multi-sig with zero-trust verification creates a powerful defense layer.

In my experience, this is the most effective strategy for protecting digital treasuries.

Because it removes reliance on human judgment alone.

Conclusion & Call to Action 🚀

The era of trusting what you hear is over.

To detect deepfake audio in real time, you need more than tools. You need a mindset shift.

Zero-trust is no longer optional.

It’s the foundation of modern security.

Start by implementing detection tools, then build human verification protocols, and finally secure financial systems with multi-layer approvals.

If you’re serious about protecting your organization, now is the time to act.

Because the next call you receive might not be real.

FAQs

How can businesses detect deepfake audio in real time?

Businesses can detect deepfake audio in real time by combining AI detection tools with human verification protocols. These systems analyze audio patterns for anomalies while teams verify requests through secondary channels. Together, they reduce the risk of successful voice spoofing attacks.

What are the signs of AI-generated voice during a live call?

The most common signs include micro-delays, unnatural pacing, and overly consistent tone. AI-generated voices may also struggle with unexpected questions, revealing latency or incoherence. Training employees to recognize these patterns can noticeably improve detection.

Why are corporate deepfake scams increasing in 2026?

Corporate deepfake scams are increasing due to advancements in AI voice cloning and the availability of public audio data. Attackers can now replicate voices quickly and use them in real-time scenarios. This makes traditional security measures less effective.

What is zero-trust voice verification?

Zero-trust voice verification is a security approach where no voice is trusted by default. Every sensitive request must be verified through independent channels, such as secure messaging or multi-person approval systems. This eliminates reliance on a single authentication factor.

How do multi-signature wallets prevent voice-based fraud?

Multi-signature wallets prevent fraud by requiring multiple approvals before executing a transaction. Even if one person is tricked by a deepfake voice, the transaction cannot proceed without additional confirmations. This makes them highly effective against social engineering attacks.

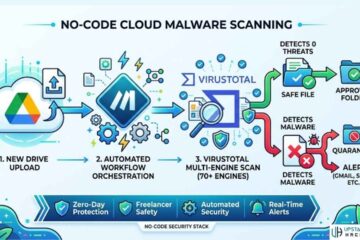

See Also: How to Build an AI Phishing Detector for Your Inbox (No-Code Hack)