🔄 Last Updated: April 19, 2026

Founder & AI Automation Specialist · Upstanding Hackers

Rana Junaid Shahid is a technology specialist and founder of Upstanding Hackers with over 5 years of hands-on experience in AI automation, no-code workflows, and digital infrastructure. He has built and deployed AI-driven pipelines with tools such as Make.com and OpenAI, delivering no-code AI automation for businesses across multiple industries. His work focuses on making complex emerging technologies practical and accessible, without requiring a developer background. Junaid covers AI agents for business, automation strategy, digital marketing technology, and Web3 infrastructure.

Why AI and Machine Learning Matter More Than Ever in 2026

AI and machine learning are no longer emerging technologies. They are the infrastructure of the modern digital world.

In 2026, global AI spending is projected to reach $2.02 trillion. The machine learning market alone is forecast to grow toward $1.88 trillion by 2035. Meanwhile, 78% of organizations now use AI in at least one core business function, according to McKinsey. These numbers reflect something profound — AI and machine learning have quietly moved from experimental lab projects into the beating heart of how businesses operate, compete, and survive.

Furthermore, the pace of change is accelerating. In 2025 alone, Epoch AI tracked 87 notable AI model releases from industry organizations — compared to just seven from academic or government sources. The private sector is now the primary driver of AI innovation, and the consequences of that shift are touching every industry on the planet.

At Upstanding Hackers, our mission is to translate complex technology into knowledge that real people can actually use. This guide covers everything you need to understand about AI and machine learning in 2026 — what they are, how they differ, where they are being applied, what risks they carry, and what the future looks like.

What Is Artificial Intelligence? A Clear, Simple Definition

Artificial intelligence refers to computer systems that perform tasks normally requiring human intelligence. These tasks include understanding language, recognizing images, making decisions, solving problems, and learning from experience.

AI is the broad umbrella. Under it sit several related disciplines — machine learning, deep learning, natural language processing, computer vision, and robotics. Each represents a different approach to building intelligent systems.

It is important to understand that AI does not mean a single technology. It is a collection of methods, tools, and frameworks working together. When your email filters spam, a recommendation engine suggests a Netflix show, or a fraud detection system flags a suspicious transaction — that is AI at work.

For a deeper exploration of how AI compares to human intelligence, our comprehensive piece on artificial intelligence vs. humans, which asks who is better, breaks down the nuances honestly.

What Is Machine Learning? The Engine Inside AI

Machine learning (ML) is a subset of AI that enables systems to learn from data without being explicitly programmed. Instead of following fixed rules, a machine learning model analyzes patterns in data and improves its accuracy over time through experience.

Think of it this way. A traditional program follows instructions: “if the email contains the word ‘lottery’, mark it as spam.” A machine learning model instead analyzes thousands of spam and non-spam emails, learns the subtle patterns that distinguish them, and builds its own detection logic — one that becomes sharper with every new example it sees.

This distinction matters enormously. Machine learning is what gives AI systems their adaptability. It is what allows them to handle situations their creators never explicitly anticipated.
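To make the contrast concrete, here is a minimal Python sketch, not a production filter: a hard-coded keyword rule next to a tiny multinomial naive Bayes classifier (a classic spam-filtering technique, chosen here for illustration) that learns word statistics from a handful of made-up labeled emails.

```python
import math
from collections import Counter


def rule_based_is_spam(email: str) -> bool:
    # Traditional program: one fixed, hand-written rule.
    return "lottery" in email.lower()


class NaiveBayesSpamFilter:
    """Multinomial naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, emails, labels):
        self.word_counts = {True: Counter(), False: Counter()}
        self.doc_counts = Counter(labels)
        for text, is_spam in zip(emails, labels):
            self.word_counts[is_spam].update(text.lower().split())
        self.vocab = set(self.word_counts[True]) | set(self.word_counts[False])
        return self

    def _log_score(self, words, is_spam):
        counts = self.word_counts[is_spam]
        total = sum(counts.values())
        # Log prior: how common this class was in the training data.
        score = math.log(self.doc_counts[is_spam] / sum(self.doc_counts.values()))
        for w in words:
            # Add-one smoothing so an unseen word does not zero out the probability.
            score += math.log((counts[w] + 1) / (total + len(self.vocab)))
        return score

    def predict(self, email: str) -> bool:
        words = email.lower().split()
        return self._log_score(words, True) > self._log_score(words, False)


# Invented training examples: the model learns from these, not from a rule.
train = [
    "win a free prize now", "claim your free reward today",
    "urgent verify your account to claim prize",
    "meeting moved to thursday", "lunch tomorrow with the team",
    "please review the attached report",
]
labels = [True, True, True, False, False, False]
model = NaiveBayesSpamFilter().fit(train, labels)

print(rule_based_is_spam("claim your free prize"))  # False: the keyword rule misses it
print(model.predict("claim your free prize"))       # True: learned word patterns catch it
```

Adding more labeled examples updates the word statistics, which is the "sharper with every new example" behavior described above; the rule only changes when someone rewrites it.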

For a thorough comparison of these two closely related concepts, our guide on the difference between artificial intelligence and machine learning is an excellent starting point.

AI vs. Machine Learning vs. Deep Learning: The Key Differences

These three terms are frequently used interchangeably, but they are not the same. Understanding the relationship between them is foundational.

Artificial Intelligence is the overarching concept — any system that mimics human cognitive functions.

Machine Learning is a specific approach to building AI — using data and algorithms to enable systems to learn without explicit programming.

Deep Learning is a subset of machine learning that uses neural networks with many layers to process complex, unstructured data like images, audio, and natural language. Deep learning is what powers voice assistants, image recognition, and most modern large language models (LLMs).

The relationship is nested: all deep learning is machine learning, and all machine learning is AI. However, not all AI uses machine learning, and not all machine learning uses deep learning.

In 2026, deep learning — particularly transformer-based models — dominates headline AI capabilities. However, traditional machine learning methods remain widely used for structured data tasks like fraud detection, risk scoring, and predictive maintenance.

How Machine Learning Actually Works: The Core Types

Understanding how machine learning works requires knowing its three primary training paradigms.

Supervised Learning

In supervised learning, the model learns from labeled data. You show it thousands of examples — each tagged with the correct answer — and it learns to predict answers for new, unseen inputs. Most commercial AI applications use supervised learning.

For example, a spam detector trained on millions of labeled emails — “spam” or “not spam” — learns to classify new messages accurately. Similarly, fraud detection models in banking learn from historical transaction data labeled as fraudulent or legitimate.
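At its core, supervised learning fits a function to labeled examples. The sketch below trains a logistic regression model with plain gradient descent on a few invented transactions. The two features (amount in thousands of dollars and a foreign-merchant flag) are assumptions chosen for illustration; real fraud models use far more data and many more features.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic_regression(X, y, lr=0.5, epochs=2000):
    """Fit weights and bias by batch gradient descent on log-loss."""
    n_features = len(X[0])
    w = [0.0] * n_features
    b = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            # Prediction error on this labeled example.
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        # Step against the average gradient.
        w = [wj - lr * gj / len(X) for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / len(X)
    return w, b


def predict_proba(w, b, x):
    """Estimated probability that transaction x is fraudulent."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


# Hypothetical labeled history: [amount in $1000s, foreign merchant (0/1)], 1 = fraud.
X = [[0.05, 0], [0.12, 0], [0.3, 0], [0.08, 1],
     [2.5, 1], [4.0, 1], [3.2, 0], [5.0, 1]]
y = [0, 0, 0, 0, 1, 1, 1, 1]

w, b = train_logistic_regression(X, y)
print(round(predict_proba(w, b, [4.5, 1]), 3))  # new, unseen transaction
print(round(predict_proba(w, b, [0.1, 0]), 3))
```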

Unsupervised Learning

Unsupervised learning works without labels. The model finds patterns and structures in data on its own. Clustering algorithms, anomaly detection systems, and recommendation engines frequently use unsupervised approaches.

For instance, an e-commerce platform might use unsupervised learning to group customers into behavioral segments — without pre-defining what those segments should look like.
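As an illustration, k-means is one of the simplest clustering algorithms. This minimal sketch groups hypothetical customers, described by two made-up features (orders per month and average basket value), without ever being told what the groups are.

```python
import math
import random


def kmeans(points, k, iterations=100, seed=0):
    """Plain k-means: alternate nearest-centroid assignment and mean updates."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # start from k distinct customers
    clusters = []
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster.
        new_centroids = []
        for i, cluster in enumerate(clusters):
            if cluster:
                new_centroids.append(tuple(sum(c) / len(cluster) for c in zip(*cluster)))
            else:
                new_centroids.append(centroids[i])  # keep an empty cluster's centroid
        if new_centroids == centroids:
            break  # converged: assignments will no longer change
        centroids = new_centroids
    return centroids, clusters


# Hypothetical customers: (orders per month, average basket value in $).
customers = [(1, 20), (2, 25), (1, 30), (2, 22),
             (10, 200), (12, 180), (11, 220), (9, 210)]
centroids, clusters = kmeans(customers, k=2)
for c in clusters:
    print(sorted(c))
```

In practice, features on very different scales are usually standardized first, because k-means relies on raw Euclidean distance and would otherwise be dominated by the larger-valued feature.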

Reinforcement Learning

Reinforcement learning trains models through trial, error, and reward. The model takes actions, receives feedback on whether those actions were good or bad, and adjusts its strategy accordingly. This is the technique behind game-playing AI systems and, increasingly, autonomous agents.

Reinforcement learning is particularly relevant in 2026 as it underpins the development of agentic AI — systems that can plan, act, and learn from outcomes in real-world environments.
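The trial, error, and reward loop is easiest to see in a multi-armed bandit, a stripped-down reinforcement learning problem with no environment state. In this sketch, the three actions and their hidden payout probabilities are invented; an epsilon-greedy agent mostly exploits the action it currently rates highest and occasionally explores at random.

```python
import random


def epsilon_greedy_bandit(reward_probs, steps=2000, epsilon=0.1, seed=0):
    """Learn which action pays off best purely from trial, error, and reward."""
    rng = random.Random(seed)
    n = len(reward_probs)
    estimates = [0.0] * n  # the agent's current belief about each action's value
    pulls = [0] * n
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(n)                           # explore
        else:
            action = max(range(n), key=lambda a: estimates[a])  # exploit
        # The environment pays 1 with the action's hidden probability, else 0.
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        pulls[action] += 1
        # Incremental mean: nudge the estimate toward the observed reward.
        estimates[action] += (reward - estimates[action]) / pulls[action]
    return estimates, pulls


estimates, pulls = epsilon_greedy_bandit([0.1, 0.5, 0.9])
print([round(e, 2) for e in estimates], pulls)
```

Full reinforcement learning adds states and delayed rewards, but the same feedback-driven update sits at its core.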

The Biggest AI and Machine Learning Trends in 2026

1. Agentic AI: From Answering to Acting

The most significant shift in AI and machine learning in 2026 is the rise of agentic AI. Traditional AI systems respond to questions. Agentic systems take action.

An AI agent can move through multi-step workflows, gather information from multiple systems, make contextual decisions, and execute tasks with minimal human input. Gartner predicts that 40% of enterprise applications will embed AI agents by the end of 2026 — up from less than 5% in 2025.

The market reflects this momentum. The agentic AI market is projected to surge from $7.8 billion today to over $52 billion by 2030. However, it is important to approach this trend with realistic expectations. Various experiments — including research from Anthropic and Carnegie Mellon — have found that current AI agents still make too many mistakes for processes involving high-stakes decisions. Moreover, agentic systems introduce new cybersecurity vulnerabilities, particularly prompt injection attacks.

Our guide on low-cost AI agents for small business workflows shows how businesses can begin implementing these systems practically and safely today.
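The multi-step behavior described in this section is typically organized as a plan, act, observe loop. The sketch below is hypothetical throughout: a scripted planner stands in for the language model, and the three tools are stubs rather than real business systems. Because tool outputs can carry injected instructions, the loop keeps a human approval gate in front of the one high-stakes action no matter what the planner asks for, and caps the number of steps.

```python
from typing import Callable, Dict, List, Optional, Tuple


# Hypothetical tools standing in for real business systems.
def lookup_order(order_id: str) -> str:
    return f"order {order_id}: delivered late"


def draft_reply(context: str) -> str:
    return f"apology drafted using: {context}"


def issue_refund(order_id: str) -> str:
    return f"refund issued for {order_id}"


TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_order": lookup_order,
    "draft_reply": draft_reply,
    "issue_refund": issue_refund,
}
HIGH_STAKES = {"issue_refund"}  # actions that always need a human sign-off

Step = Tuple[str, str, str]  # (tool, argument, observation)


def scripted_planner(goal: str, history: List[Step]) -> Optional[Tuple[str, str]]:
    """Stand-in for an LLM (ignores `goal`): next tool call, or None when done."""
    plan = ["lookup_order", "draft_reply", "issue_refund"]
    if len(history) >= len(plan):
        return None
    tool = plan[len(history)]
    # Feed the previous observation forward as context for drafting.
    arg = history[-1][2] if tool == "draft_reply" else "A-1001"
    return tool, arg


def run_agent(goal, planner, approve, max_steps=5) -> List[Step]:
    history: List[Step] = []
    for _ in range(max_steps):  # hard cap so a confused planner cannot loop forever
        step = planner(goal, history)
        if step is None:
            break
        tool, arg = step
        if tool in HIGH_STAKES and not approve(tool, arg):
            observation = "BLOCKED: human approval required"
        else:
            observation = TOOLS[tool](arg)
        history.append((tool, arg, observation))
    return history


log = run_agent("resolve a late-delivery complaint", scripted_planner,
                approve=lambda tool, arg: False)
for tool, arg, observation in log:
    print(f"{tool}({arg!r}) -> {observation}")
```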

2. Smaller, Specialized Models Are Replacing Giant Generalist Models

For several years, the dominant narrative was “bigger is better.” Larger models with more parameters consistently outperformed smaller ones. In 2026, that assumption is being challenged.

IBM’s Director of Open Source AI, Anthony Annunziata, explains it clearly: “Instead of one giant model for everything, you’ll have smaller, more efficient models that are just as accurate — maybe more so — when tuned for the right use case.”

This trend has enormous practical implications. Smaller, domain-specific models are cheaper to run, easier to fine-tune, more secure, and faster to deploy. They also require less infrastructure, making enterprise AI more accessible to organizations that cannot afford to operate massive cloud AI workloads.
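A back-of-the-envelope calculation shows why size drives infrastructure cost: the memory needed just to hold a model’s weights is roughly the parameter count times the bytes per parameter. The 7-billion and 70-billion parameter sizes below are illustrative, and the figures exclude activations, caches, and runtime overhead.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory to hold a model's weights, in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9


for name, params in [("7B model", 7e9), ("70B model", 70e9)]:
    for bits in (16, 4):
        print(f"{name} at {bits}-bit: {weight_memory_gb(params, bits):.1f} GB")
# 7B model at 16-bit: 14.0 GB
# 7B model at 4-bit: 3.5 GB
# 70B model at 16-bit: 140.0 GB
# 70B model at 4-bit: 35.0 GB
```

A 7B model at 4-bit precision fits comfortably on a single consumer GPU, while a 70B model at 16-bit needs multiple data-center accelerators just for its weights.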

3. Multimodal AI: Understanding Text, Images, Audio, and Video Together

Multimodal AI systems can process and generate multiple types of data simultaneously. Modern LLMs now handle text, images, audio, and increasingly video within a single model architecture.

This represents a fundamental capability expansion. For instance, a multimodal AI system can analyze a photograph of a product defect, read the associated maintenance log, and recommend a repair procedure — all as part of a single integrated workflow. Consequently, multimodal AI is accelerating adoption in healthcare, manufacturing, retail, and content creation.

4. Edge AI: Intelligence Without Internet Dependency

Edge AI brings machine learning inference directly to devices — smartphones, wearables, autonomous vehicles, industrial sensors — rather than sending data to remote cloud servers. This reduces latency, enhances privacy, and enables real-time AI performance without network dependency.

New AI-enabled chips like the Hailo-10H edge AI accelerator and the NXP i.MX 95 processor family are bringing dedicated AI acceleration to endpoints that previously lacked it. Similarly, TinyML, a field focused on building small, highly optimized ML models, is making edge AI possible even on ultra-low-power devices.
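Much of what makes TinyML feasible is quantization: storing weights as 8-bit integers instead of 32-bit floats, which cuts weight storage by roughly 4x. Below is a minimal sketch of one common scheme, symmetric per-tensor int8 quantization. The weight values are made up, and production edge toolchains handle considerably more, such as quantizing activations.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest step, clamping in case of floating-point overshoot.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the stored integers."""
    return [v * scale for v in q]


weights = [0.82, -1.27, 0.003, 0.5, -0.61]  # made-up float32 weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q)  # [82, -127, 0, 50, -61]
print(max(abs(a - b) for a, b in zip(weights, restored)))  # error is at most scale / 2
```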

5. AI Governance and Responsible AI Are No Longer Optional

Perhaps the most important non-technical trend in AI and machine learning is the maturation of governance frameworks. Organizations can no longer deploy AI without formal oversight structures.

According to recent research, only 37% of organizations that run generative AI have a formal AI policy. This gap is dangerous. Poorly governed AI introduces risks including bias, misconfiguration, data exposure, and regulatory liability. As a result, responsible AI — which encompasses fairness, transparency, explainability, and human oversight — is becoming a baseline design requirement rather than an optional enhancement.

In the World Economic Forum’s Global Cybersecurity Outlook 2026, 87% of respondents identified AI-related vulnerabilities as the fastest-growing category of cyber risk. This directly connects AI governance to the broader cybersecurity agenda explored in our AI in cybersecurity guide.

6. Generative AI Becomes Invisible Infrastructure

Generative AI is moving from a visible, headline-grabbing feature to invisible background infrastructure. In 2026, generative AI is no longer treated as an add-on — it is embedded directly into workflows, productivity tools, development environments, and business applications.

This “invisible AI” shift means that most users will interact with generative AI daily without consciously thinking about it. Furthermore, it means the ability to govern, audit, and understand AI systems is becoming more critical — because the systems are less visible, not more.

Real-World Applications of AI and Machine Learning by Industry

Healthcare

In healthcare, AI and machine learning are transforming diagnostics, drug discovery, patient monitoring, and clinical workflow automation. Approximately 65–70% of healthcare organizations now use these technologies to improve patient diagnostics and analyze medical data.

In published studies, AI models have detected cancerous lesions in medical imaging with accuracy comparable to specialist physicians. Machine learning systems analyze electronic health records to predict which patients are at high risk of deterioration. Additionally, drug discovery pipelines that once took a decade now benefit from AI-accelerated molecular screening.

Financial Services

The financial sector leads AI adoption for fraud detection and risk management, with 70–75% of financial companies deploying machine learning for these purposes. AI systems analyze millions of transactions per second, flagging anomalies in real time.

Additionally, AI-powered credit scoring, algorithmic trading, personalized investment advice, and regulatory compliance automation have fundamentally changed how financial institutions operate. Understanding how AI intersects with digital finance is also central to our cryptocurrency and blockchain coverage.
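Real-time anomaly flagging can be illustrated with a deliberately simple statistical baseline. This sketch keeps a running mean and variance using Welford’s online algorithm and flags any transaction more than three standard deviations from the mean. The threshold, warm-up length, and amounts are assumptions; production fraud systems combine many learned features rather than relying on a single z-score.

```python
import math


class StreamingAnomalyDetector:
    """Flags values far from the running mean, updating one event at a time."""

    def __init__(self, threshold=3.0, warmup=10):
        self.threshold = threshold
        self.warmup = warmup  # observations required before flagging starts
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations (Welford's algorithm)

    def observe(self, amount: float) -> bool:
        """Return True if `amount` looks anomalous; otherwise fold it into the stats."""
        if self.n >= self.warmup:
            std = math.sqrt(self.m2 / (self.n - 1))
            if std > 0 and abs(amount - self.mean) / std > self.threshold:
                return True  # flagged values are kept out of the baseline in this sketch
        # Welford's update: constant memory, no stored transaction history.
        self.n += 1
        delta = amount - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (amount - self.mean)
        return False


detector = StreamingAnomalyDetector()
amounts = [42, 38, 45, 40, 41, 39, 44, 43, 37, 40, 42, 41, 950, 39]  # invented card spend
flags = [detector.observe(a) for a in amounts]
print([a for a, f in zip(amounts, flags) if f])  # [950]
```

Keeping flagged values out of the baseline stops a fraud burst from inflating the "normal" range, at the cost of adapting more slowly when a customer’s genuine spending changes.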

Retail and E-Commerce

The retail sector uses machine learning for personalization, demand forecasting, pricing optimization, and supply chain management. Globally, retail businesses spend $18.7 billion annually on ML-powered trend prediction and supply chain management.

Amazon’s recommendation engine is the most famous example, but machine learning now powers everything from dynamic pricing to inventory management to customer churn prediction.
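Demand forecasting often starts from simple baselines before moving to ML models. Simple exponential smoothing, sketched below with invented weekly sales figures and an assumed smoothing factor, forecasts next period’s demand as a weighted average that favors recent weeks.

```python
def simple_exponential_smoothing(history, alpha=0.3):
    """One-step-ahead forecast: each observation pulls the level toward itself by alpha."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = history[0]
    for demand in history[1:]:
        level = alpha * demand + (1 - alpha) * level
    return level


weekly_units = [120, 132, 101, 134, 90, 130, 140]  # invented sales history
print(round(simple_exponential_smoothing(weekly_units), 1))  # next week's forecast
```

This baseline models neither trend nor seasonality; extensions such as Holt-Winters add those, and ML forecasters can add external signals such as promotions and pricing.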

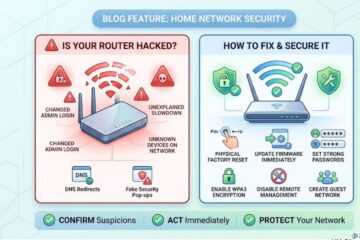

Cybersecurity

AI and machine learning may matter most of all in cybersecurity. ML models detect anomalies, identify zero-day threats, automate incident response, and power the threat intelligence platforms that modern security teams depend on.

Our dedicated guide on AI in cybersecurity covers this application in depth, including both defensive applications and how attackers are weaponizing AI. Additionally, our threat intelligence guide explains how machine learning transforms raw data into actionable security intelligence.

Marketing and Digital Business

Marketing was one of the earliest enterprise adopters of machine learning. Approximately 49% of organizations now apply ML and AI to marketing and sales operations. Applications include customer segmentation, content personalization, predictive lead scoring, churn prevention, and campaign optimization.

Our content marketing tools guide and PPC for small businesses article both reflect how AI is reshaping digital marketing strategy for businesses of every size. Moreover, the emergence of Generative Engine Optimization (GEO) demonstrates how even SEO is being transformed by large language models.

Key Statistics: AI and Machine Learning in 2026

These figures come from the most recent industry research and paint a clear picture of the current landscape:

- $2.02 trillion — projected global AI spending in 2026

- $279 billion — global AI market size in 2024, projected to reach $3.5 trillion by 2033 (Grand View Research)

- 78% of organizations use AI in at least one business function (McKinsey)

- 91.5% of leading companies invest in ML or AI capabilities each year

- 85% of enterprises in the United States use ML and AI technologies in 2026

- 82% of businesses actively seek employees with machine learning expertise

- 73% of company leaders believe ML can potentially double employee productivity

- 80% of companies report that investing in ML tools increases earnings

- 48% of businesses use some form of ML or AI — but only 8% fully leverage advanced ML, deep learning, and NLP

- 63,272 companies globally now use data science and ML tools

- 2.5 million ML and AI specialists in the global workforce, with 219,000 new positions added in the past year alone

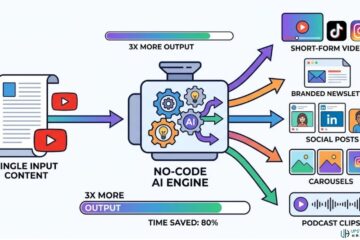
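Growth projections like the Grand View Research figure in the list above are easier to compare once converted into a compound annual growth rate: CAGR = (end / start)^(1 / years) - 1. The sketch below applies that formula to the $279 billion (2024) and $3.5 trillion (2033) figures. It is simple arithmetic on the quoted numbers, not an independent forecast.

```python
def implied_cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate that turns start_value into end_value over `years`."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above: $279 billion in 2024, $3.5 trillion projected for 2033.
rate = implied_cagr(279e9, 3.5e12, 2033 - 2024)
print(f"Implied growth: about {rate:.0%} per year")  # Implied growth: about 32% per year
```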

AI and Machine Learning in No-Code Environments

One of the most democratizing trends in AI and machine learning is the rise of no-code and low-code platforms. These tools allow people with no programming background to build, deploy, and benefit from AI systems.

Platforms like Make.com and Zapier now provide visual interfaces for building AI-powered automation workflows. OpenAI’s API is accessible through these platforms without writing a single line of code. Consequently, the barrier to building useful AI applications has dropped dramatically.
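Under the hood, a no-code OpenAI step sends an HTTPS request to OpenAI’s API. The Python sketch below builds a comparable Chat Completions request for a phishing check without sending it. The model name and system prompt are assumptions for illustration, and this is not a description of how Make.com or Zapier are implemented internally.

```python
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_phishing_check_request(email_text: str, model: str = "gpt-4o-mini") -> urllib.request.Request:
    """Build (but do not send) a Chat Completions request classifying one email."""
    body = {
        "model": model,  # assumed model name; use whichever model your account offers
        "messages": [
            {"role": "system",
             "content": "Classify the email as PHISHING or SAFE. Reply with one word."},
            {"role": "user", "content": email_text},
        ],
        "temperature": 0,  # favor consistent labels over creative wording
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {os.environ.get('OPENAI_API_KEY', '')}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


email = "Your account is locked. Verify your password at the link below."
req = build_phishing_check_request(email)
print(req.get_method(), req.full_url)
```

To actually send it, you would pass the request to `urllib.request.urlopen` with a valid `OPENAI_API_KEY` set, then read the label from `choices[0].message.content` in the JSON response. A no-code module hides exactly these steps behind form fields.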

At Upstanding Hackers, we have invested heavily in documenting these no-code approaches. Our tutorials cover:

- Building an AI phishing detector for your inbox without code

- Automating malware scanning using Make.com and VirusTotal

- Building a WhatsApp scam detector with OpenAI and Make.com

- Low-cost AI agents for small business workflows

Each guide is built on the same philosophy: you should not need a computer science degree to benefit from machine learning.

The Risks and Challenges of AI and Machine Learning

Bias and Fairness

Machine learning models learn from historical data. If that data reflects historical biases — in hiring, lending, law enforcement, or healthcare — the model will reproduce and sometimes amplify those biases. Addressing algorithmic fairness is one of the most important active research areas in the field.
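One common first check for this kind of bias compares selection rates across groups. The sketch below computes the disparate impact ratio (the lowest group’s approval rate divided by the highest) for invented lending decisions; a ratio under 0.8 fails the "four-fifths" rule of thumb from US employment-selection guidelines. Passing this single check does not prove that a model is fair.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, approved: bool). Returns approval rate per group."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += ok  # True counts as 1, False as 0
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(decisions):
    """Lowest group selection rate divided by the highest (1.0 = parity)."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Invented model decisions for two applicant groups.
decisions = ([("A", True)] * 60 + [("A", False)] * 40
             + [("B", True)] * 35 + [("B", False)] * 65)
ratio = disparate_impact_ratio(decisions)
print(selection_rates(decisions), round(ratio, 2))
print("fails four-fifths check" if ratio < 0.8 else "passes four-fifths check")
```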

Explainability and the “Black Box” Problem

Many deep learning models, while highly accurate, cannot easily explain how they reached a specific decision. This “black box” problem creates challenges in regulated industries where decisions must be explainable and auditable. Explainable AI (XAI) is a growing discipline specifically addressing this limitation.
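One model-agnostic XAI technique is permutation importance: shuffle one input feature at a time and measure how much the model’s accuracy drops. The sketch treats the model as an opaque function. The toy model and data are invented, and the model deliberately ignores the second feature, so that feature’s importance comes out as zero.

```python
import random


def accuracy(model, X, y):
    return sum(model(x) == t for x, t in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=5, seed=0):
    """Average accuracy drop when each feature's column is shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [x[j] for x in X]
            rng.shuffle(column)  # break the link between feature j and the labels
            X_perm = [x[:j] + [v] + x[j + 1:] for x, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


def black_box(x):
    # Opaque to the explainer: only the first feature drives the decision.
    return int(x[0] >= 10)


X = [[i, (i * 7) % 5] for i in range(20)]
y = [int(i >= 10) for i in range(20)]
importances = permutation_importance(black_box, X, y)
print([round(v, 2) for v in importances])
```

A large drop means the model leans heavily on that feature, which gives auditors a starting point even when the model’s internals cannot be inspected.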

Data Privacy and Security

AI models require enormous quantities of data for training. This creates significant privacy considerations, particularly when that data includes sensitive personal information. Furthermore, attackers can sometimes query a trained model to recover sensitive details about its training data; model inversion is one well-known attack of this kind.

For practical privacy protection strategies, our guide on how to stop AI from reading your Gmail provides actionable steps anyone can take immediately.

The Skills Gap

Despite record adoption, the AI skills gap remains one of the most critical constraints on progress. 82% of businesses are actively seeking employees with machine learning expertise. Meanwhile, ISACA reports that the most critical skills gaps in new graduates are not technical — they are critical thinking, communication, and problem-solving.

This underscores an important truth. AI and machine learning are not purely technical disciplines. They require judgment, ethics, domain knowledge, and human oversight to deploy responsibly and effectively.

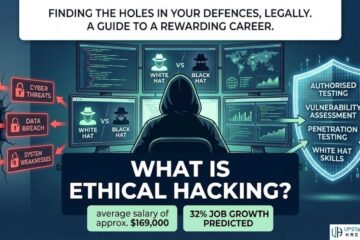

AI and Machine Learning Careers: What the Landscape Looks Like

In-Demand Roles

The AI and machine learning job market is expanding at a remarkable pace. The US Bureau of Labor Statistics projects 32% job growth in related information technology roles through 2032. In-demand roles include:

- Machine Learning Engineer

- Data Scientist

- AI/ML Researcher

- MLOps Engineer

- AI Governance Specialist

- LLM Security Engineer

- Prompt Engineer

Notably, roles focused on governance, security, and responsible AI are among the fastest-growing. This reflects the industry’s maturation — technical capability is necessary but no longer sufficient on its own.

How to Start Learning

If you are new to AI and machine learning, the journey does not need to be overwhelming. Our comprehensive guide on how a beginner can start learning AI in 2026 provides a clear, structured learning roadmap.

Similarly, our piece on AI augmenting humans frames the human-AI collaboration model that defines modern work — helping you understand where human skills remain irreplaceable alongside machine capabilities.

For those interested in the technical side of computing and security as a career foundation, our guides on technical skills for IT freshers and on career prospects after a B.Tech in computer science provide strong starting points.

The Ethical Dimension: Is AI Good for Society?

This question is no longer purely academic. As AI and machine learning permeate healthcare, criminal justice, education, financial services, and public governance, the ethical implications are tangible and urgent.

Our dedicated long-form piece on whether artificial intelligence is good for society presents an honest, evidence-based exploration of both sides of this debate. As AI systems increasingly shape life outcomes — who gets a loan, who gets a job interview, who gets flagged by a security system — building AI that is fair, transparent, and accountable is not optional. It is a moral imperative.

The challenge, consequently, is not whether to use AI and machine learning. They are already woven into the fabric of modern life. The challenge is using them wisely — with governance, transparency, and consistent human judgment at the center.

FAQs

1. What is the difference between AI and machine learning?

Artificial intelligence is the broad concept of machines performing tasks that normally require human intelligence. Machine learning is a specific method of achieving AI — one where systems learn from data and improve automatically through experience, without being explicitly reprogrammed. All machine learning is a form of AI, but not all AI uses machine learning.

2. How is machine learning being used in business today?

Machine learning is used across virtually every industry. Common applications include fraud detection in finance, medical image analysis in healthcare, product recommendations in retail, predictive maintenance in manufacturing, customer service chatbots, spam filtering, credit scoring, and marketing personalization. According to McKinsey, 78% of organizations currently use AI in at least one business function.

3. Do you need to know how to code to use AI and machine learning tools?

Not necessarily. No-code platforms like Make.com and Zapier now enable non-technical users to build AI-powered workflows without writing code. Additionally, many AI applications — from smart email filters to AI writing assistants to automated analytics dashboards — are accessible through standard software interfaces. That said, a foundational understanding of how machine learning works will help you use these tools more effectively.

4. What are the biggest risks of AI and machine learning?

The primary risks include algorithmic bias (models reproducing historical inequalities), explainability challenges (the “black box” problem), data privacy vulnerabilities, cybersecurity risks such as prompt injection and model inversion attacks, and over-reliance on automated systems without adequate human oversight. Additionally, the governance gap — where AI is deployed faster than formal policies are created — is a widespread organizational risk in 2026.

5. What is the future of AI and machine learning?

The near-term future of AI and machine learning centers on agentic AI (systems that act autonomously), smaller specialized models, multimodal capabilities, edge AI (intelligence on devices without cloud dependency), and deeper integration of AI into everyday software and workflows. Longer term, physical AI — robotics and systems that can perceive and act in the real world — is expected to be the next major frontier.