🔄 Last Updated: May 1, 2026

Pretexting in cybersecurity is the art of building a believable lie, and in 2026 it has become the dominant form of social engineering attack.

A pretext is a fabricated scenario that an attacker constructs to manipulate a target into handing over sensitive information, authorizing a financial transfer, or granting access they would never approve under normal circumstances. No malware. No zero-day exploits. Just a convincing story delivered by someone who sounds exactly like they belong.

The numbers confirm how effective this has become. Pretexting now accounts for over 50% of all social engineering incidents, according to the Verizon Data Breach Investigations Report 2025 — nearly doubling from prior years and marking the first time pretexting overtook traditional phishing as the dominant social engineering method. It is directly responsible for 27% of all social engineering-based data breaches globally. The human element, which pretexting exploits above all else, appears in 60% of all confirmed breaches in Verizon’s 2025 dataset.

I have worked through real incident reports, threat intelligence data, and breach investigations to build this guide. The pattern that emerges is consistent: pretexting succeeds not because targets are careless, but because attackers invest genuine effort in becoming someone you have every reason to trust.

What Is Pretexting? The Core Definition

Pretexting is a social engineering technique in which an attacker invents a fabricated identity, situation, or story — the “pretext” — to psychologically manipulate a target into taking an action that serves the attacker’s goal. That goal is almost always one of three things: extracting sensitive information, gaining unauthorized system access, or initiating a fraudulent financial transaction.

The term itself predates cybersecurity. Pretexting in the physical world describes the practice of a private investigator posing as a bank employee or government official to extract personal information from a third party. In the digital era, the same psychological manipulation scales to millions of targets through email, phone, SMS, instant messaging, and even video calls powered by AI-generated deepfake identities.

What separates pretexting from standard phishing is the depth of preparation and sustained interaction it requires. A phishing email is a single, automated message sent at scale. A pretexting attack involves research, relationship-building, constructed backstory, and often multiple touchpoints over days or weeks before the attacker makes their actual request. That investment of time and effort is precisely what makes pretexting so convincing — and so difficult to defend against through technical controls alone.

Additionally, pretexting is the engine that drives the most financially devastating cyberattack category in existence: Business Email Compromise (BEC). BEC losses in 2024 reached $6.3 billion according to FBI data cited in the Verizon DBIR — with a median loss per incident of $50,000. Behind every successful BEC attack is a pretext: a fabricated story compelling enough to make an employee authorize a wire transfer, update vendor payment details, or share credential access to critical systems.

How Pretexting Attacks Work: The Psychology Behind the Deception

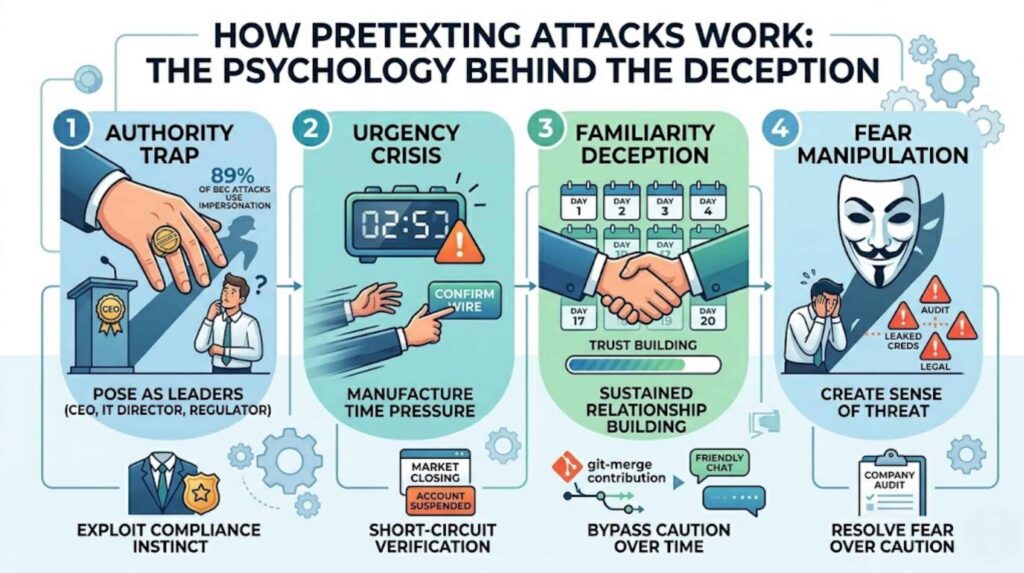

Understanding why pretexting works requires understanding the psychological triggers it reliably exploits. Attackers do not guess at these vulnerabilities — they deliberately engineer situations that activate them.

Authority is the most powerful trigger. People comply with requests from those they perceive as having power over them. An attacker posing as a CEO, an IT security director, a regulator, or a law enforcement officer generates compliance that the same request from a peer would never receive. 89% of BEC attacks involve impersonating leaders such as CEOs or CFOs — because that authority dynamic consistently bypasses critical thinking.

Urgency is the second trigger. When a situation demands immediate action, people skip the verification steps they would otherwise take. “We need this wire transferred before the markets close,” or “Your account will be suspended in two hours unless you verify now” — these manufactured time pressures are not accidental. They are deliberate design choices that short-circuit rational evaluation.

Familiarity builds trust over time. The most sophisticated pretexting attacks, like the Bybit heist of 2025, involve sustained relationship-building before any malicious request is made. In that case, the attacker spent 20 days posing as a trusted software contributor before executing a theft that resulted in $1.5 billion stolen — the largest cryptocurrency theft in history. By the time the attack launched, the target had every reason to trust the attacker implicitly.

Fear drives hasty compliance. A caller claiming your company is under active audit, that your credentials have been leaked, or that legal action is imminent creates a psychological state in which verification feels secondary to resolving the threat. Consequently, targets take actions they would otherwise never authorize, simply to make the source of fear go away.
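The triggers described above can be turned into a rough triage aid for inbound messages. What follows is a minimal sketch, not a detector: the cue lists, the function names `detect_triggers` and `needs_extra_verification`, and the threshold of two triggers are all assumptions made for illustration. Familiarity is deliberately left out, because it builds across many interactions and has no reliable keyword signature.

```python
import re

# Hypothetical cue lists, for illustration only. Familiarity is left out
# because it builds over many interactions and has no reliable keyword.
TRIGGER_CUES = {
    "authority": [r"\bceo\b", r"\bcfo\b", r"\bregulator\b", r"\bit security\b"],
    "urgency": [r"\burgent(ly)?\b", r"\bimmediately\b", r"\bbefore .* close\b",
                r"\bin (two|\d+) hours?\b"],
    "fear": [r"\bsuspended\b", r"\baudit\b", r"\blegal action\b", r"\bleaked\b"],
}


def detect_triggers(text: str) -> set[str]:
    """Return the names of the triggers whose cues appear in the text."""
    lowered = text.lower()
    return {
        name
        for name, patterns in TRIGGER_CUES.items()
        if any(re.search(pattern, lowered) for pattern in patterns)
    }


def needs_extra_verification(text: str, min_triggers: int = 2) -> bool:
    """Flag a message when several triggers stack up in one request."""
    return len(detect_triggers(text)) >= min_triggers
```

A heuristic like this will miss well-written pretexts and flag some legitimate messages, so it is best treated as a prompt to verify rather than as a verdict.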

Common Pretexting Scenarios You Need to Recognize

Pretexting attacks follow recognizable patterns, even though the specific details vary by target. Knowing these scenarios is your first line of recognition.

IT Help Desk Impersonation

An attacker contacts an employee claiming to be from the IT security team. They explain they have detected suspicious activity on the employee’s account and need to verify the employee’s identity before resetting access. The employee, believing the call is legitimate, provides their username or a one-time code, or even temporarily grants remote access to their device. The attacker uses that access to move laterally through the organization’s systems.

This is one of the most common enterprise pretexting vectors in 2026. Mandiant’s M-Trends 2026 report documents threat groups like UNC3944 (Scattered Spider) targeting IT help desks specifically, impersonating employees to bypass MFA and gain access to SaaS environments. Once inside, they harvest OAuth tokens and session cookies that persist even after password changes.

CEO or Executive Impersonation

An employee in accounting or finance receives an urgent email — sometimes combined with a follow-up call — from someone claiming to be the CEO or CFO. The message explains that a confidential acquisition is underway and requires an immediate wire transfer to a new vendor account. The request is marked sensitive and asks the employee not to discuss it with colleagues. The employee, intimidated by the executive authority and the confidentiality framing, authorizes the transfer.

UK Finance reported £257.5 million in Authorized Push Payment (APP) fraud losses in H1 2025 alone, up 12% year-over-year. The pretext is almost always a variant of: “Supplier bank details have changed. Payment is urgent.” The weakness that makes it work: no verified call-back process, a single approver, and no timed hold before funds release.

Vendor or Supplier Impersonation

An attacker researches a company’s supplier relationships — often through LinkedIn, the company website, or previously breached data — and then contacts the accounts payable team posing as a representative of a known vendor. They explain that banking details have changed due to an internal restructuring and ask for future payments to be directed to a new account. Without a robust vendor change verification protocol, the fraud succeeds silently over multiple payment cycles before anyone notices.
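A vendor change verification protocol of the kind this scenario calls for can be sketched as a gate in front of the payment run. The example below is an illustration under stated assumptions: the `BankDetailChange` record, the `may_pay_to_new_account` function, and the 48-hour hold are hypothetical and not drawn from any standard. It combines two of the controls named earlier, a verified call-back and a timed hold before funds release.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Assumed hold length for illustration; choose a value that fits your payment cycle.
HOLD_PERIOD = timedelta(hours=48)


@dataclass
class BankDetailChange:
    vendor_id: str
    new_account: str
    requested_at: datetime
    # Set to True only after calling the vendor on the number already on file,
    # never on a number supplied in the change request itself.
    callback_verified: bool = False


def may_pay_to_new_account(change: BankDetailChange, now: datetime) -> bool:
    """Allow payment to the new account only after verification and the hold."""
    hold_elapsed = now - change.requested_at >= HOLD_PERIOD
    return change.callback_verified and hold_elapsed
```

Until both conditions hold, payments would continue to the account on file or pause entirely, which removes the attacker's ability to rush the change through.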

Government or Regulatory Impersonation

Attackers pose as IRS agents, GDPR compliance officers, or financial regulators conducting mandatory audits. They request sensitive company data, employee information, or system access to “complete the audit.” Fear of regulatory penalty drives compliance. Furthermore, GDPR-themed pretexting — fake data subject access requests designed to exfiltrate personal data — has emerged as a specific EU-targeting technique, exploiting organizations’ legal obligations around data subject access compliance.

Tech Support or Security Researcher Impersonation

An attacker contacts an employee posing as a cybersecurity researcher or vendor representative who has discovered a vulnerability affecting the company. They offer to walk the employee through a “security verification process” that actually installs remote access software or reveals credentials. The 2025 FileFix technique, first spotted by Check Point Research in July 2025, uses exactly this pretext — convincing users they are performing a legitimate security action while actually executing malicious PowerShell commands.

Pretexting vs Phishing: Understanding the Critical Difference

Many people conflate pretexting with phishing. They share the same goal — deception leading to unauthorized access or information disclosure — but they are fundamentally different in execution and defense requirements.

| Factor | Pretexting | Phishing |
|---|---|---|
| Primary channel | Voice, email, chat, in-person | Email (primarily) |
| Preparation required | High: research, identity construction, relationship building | Low: templates, automated sending |
| Scale | Targeted (spear): individual or small group | Mass or spear variants |
| Duration | Days to weeks of sustained interaction | Single message or short exchange |
| Primary psychological trigger | Authority, familiarity, urgency | Urgency, fear, curiosity |
| Technical filtering effectiveness | Very low: human-to-human interaction | Moderate: email gateways, spam filters |
| Verizon DBIR 2025 share | 50%+ of social engineering incidents | 23% of social engineering incidents |
| Average financial loss | Very high ($50,000+ median BEC loss) | Moderate (credential theft, account takeover) |

The operational implication of this table is significant. Because pretexting involves human-to-human interaction at its core, technical controls — firewalls, spam filters, endpoint detection — provide almost no protection against it. Consequently, process controls and human training are the only reliable defenses.

Understanding how phishing works at a technical level helps you recognize where phishing ends and pretexting begins. They frequently operate as a team: a phishing email establishes initial context (perhaps appearing to be from a trusted vendor), and then a follow-up pretexting call closes the deception with manufactured authority and urgency.

Real-World Pretexting Attacks That Defined 2025 and 2026

Studying real cases builds pattern recognition that no amount of abstract training can replicate.

The Bybit Heist (February 2025) remains the most financially significant pretexting attack on record. The attacker spent 20 days building credibility as a legitimate software contributor within the target’s development environment. By the time the attack executed, the level of established trust was sufficient to compromise a multi-signature cold wallet transaction — resulting in $1.5 billion in cryptocurrency stolen in a single operation.

The Scattered Spider / ShinyHunters Campaign (2025-2026) compromised over 760 organizations using professionalized pretexting kits targeting IT help desks. Operators were paid $500-$1,000 per call using pre-written scripts. Confirmed victims included major enterprises across retail, financial services, and technology. The pretext was always consistent: an employee who had been locked out of their account, urgently requiring a password reset.

The CarGurus Breach (2026) exposed 12.4 million records following a single vishing call that combined impersonation pretexting with credential harvesting. One call yielded SSO credentials sufficient to exfiltrate millions of records — demonstrating how efficiently a well-constructed pretext scales to organizational-level access from a single employee interaction.

These cases share a common thread. All three campaigns succeeded not because of technical sophistication, but because the pretexts were researched, rehearsed, and delivered with the confidence of authority.

How AI Is Industrializing Pretexting in 2026

Pretexting was historically limited by the time it required. A human attacker could construct and deliver a few dozen convincing pretexts per day. AI has removed that constraint entirely.

AI-powered tools now generate thousands of contextually relevant, grammatically perfect pretexting messages per hour — personalized using data scraped from LinkedIn, social media, company websites, and breached databases. The same tools simulate conversation in real time, adapting to victim responses mid-interaction. AI voice cloning creates synthetic voices indistinguishable from real colleagues or executives. Deepfake video, now accessible through commercial tools, produces convincing visual identity verification on demand.

The implications are severe. 63% of cybersecurity and IT professionals cited AI-driven social engineering as their top organizational cyber threat in 2026. At the same time, defenders are deploying AI to detect behavioral anomalies, flag unusual communication patterns, and automate incident response, creating a genuine arms race at the human manipulation layer.

For a deeper understanding of how AI is reshaping the threat landscape beyond pretexting, reviewing AI cybersecurity tools designed for small and mid-sized businesses reveals what AI-assisted defense looks like in practice at every budget level.

How to Defend Against Pretexting Attacks

Because pretexting bypasses technical controls by design, defenses must operate at the process, training, and verification layers. Technical tools play a supporting — not primary — role.

Mandatory Out-of-Band Verification

The single most effective process control against pretexting is out-of-band verification for all high-value requests. Any request involving financial transactions, credential changes, system access grants, or sensitive data disclosure must be verified through a completely independent channel — not the channel through which the request arrived.

If a “CEO” emails requesting an urgent wire transfer, the verification call goes to the CEO’s known direct number, looked up independently, not the number provided in the email. If an “IT security” caller requests a password reset, the employee hangs up and calls the IT department’s official internal number. This single protocol breaks the pretexting chain at the moment it matters most.
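The call-back rule above comes down to one design choice: the contact used for verification is looked up from a source the requester cannot influence. Here is a minimal sketch of that lookup, assuming a hypothetical `TRUSTED_DIRECTORY` maintained separately from inbound mail and phone systems. The record fields, addresses, and phone numbers are invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical directory maintained independently of any inbound request,
# for example exported from HR records. All entries here are made up.
TRUSTED_DIRECTORY = {
    "ceo@example.com": "+1-555-0100",
    "it-helpdesk@example.com": "+1-555-0199",
}


@dataclass
class InboundRequest:
    claimed_sender: str      # identity the requester claims to be
    channel: str             # channel the request arrived on, e.g. "email"
    contact_in_message: str  # number or address supplied by the requester
    action: str              # what is being asked for


def callback_target(request: InboundRequest) -> str:
    """Return the contact to verify with, never the one in the message."""
    known = TRUSTED_DIRECTORY.get(request.claimed_sender)
    if known is None:
        # No independent contact on record: escalate instead of improvising.
        raise LookupError(f"No verified contact for {request.claimed_sender}")
    return known
```

Note that `contact_in_message` is stored but never returned. Keeping it only as evidence, and refusing to proceed when no independent contact exists, is what prevents the attacker from steering the verification call.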

Identity Verification Protocols at Every Touchpoint

Establish documented identity verification procedures for anyone requesting sensitive actions. Help desks need written protocols specifying what verification steps are required before any account modification, reset, or access grant. Financial teams need dual-approval requirements for any payment instruction change or new payee addition. These protocols must be written, trained, and tested — not assumed.

Data protection best practices that address data handling also apply directly here: defining who is authorized to request what data, through what channels, with what verification, closes the ambiguity that pretexting exploits.
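The dual-approval requirement for payment instruction changes can be expressed as a small invariant: two distinct people, neither of them the requester, must sign off before anything is released. The sketch below is one possible shape for that rule. The `PaymentInstruction` class, its field names, and the threshold of two approvals are illustrative assumptions rather than a reference to any particular finance system.

```python
from dataclasses import dataclass, field

REQUIRED_APPROVALS = 2  # assumed threshold for illustration


@dataclass
class PaymentInstruction:
    payee: str
    amount: float
    requested_by: str
    approvers: set[str] = field(default_factory=set)

    def approve(self, employee: str) -> None:
        """Record an approval; requesters may not approve their own request."""
        if employee == self.requested_by:
            raise PermissionError("The requester cannot approve their own instruction")
        # A set ignores repeat approvals from the same person.
        self.approvers.add(employee)

    def is_authorized(self) -> bool:
        """True once enough distinct approvers have signed off."""
        return len(self.approvers) >= REQUIRED_APPROVALS
```

Because approvals are stored as a set, one pressured employee approving twice still counts once, so a single successful pretext is not enough to release funds.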

Security Awareness Training Focused on Social Engineering

Generic security training is insufficient against pretexting. Training must specifically simulate the pretexting scenarios your organization is most likely to face — IT help desk impersonation, executive wire transfer requests, vendor payment update fraud. Role-specific training for finance teams, help desk staff, and executive assistants is particularly high-leverage because these roles are disproportionately targeted.

KnowBe4’s 2025 data across 14.5 million users indicates that consistent security awareness training reduces social engineering susceptibility from 33.1% to 4.1% over 12 months, which KnowBe4 reports as an 86% reduction. Furthermore, training that specifically includes pretexting simulations, not just phishing simulations, builds recognition of the authority and urgency manipulation patterns that pretexting depends on.

Understanding adjacent attack types deepens this recognition. Learning how baiting attacks work, how phishing emails are structured, and how pharming redirects legitimate traffic builds a comprehensive mental model of social engineering that makes any individual attack easier to recognize — regardless of which specific technique an attacker deploys.

Phishing-Resistant MFA and Session Security

While pretexting often bypasses credential theft entirely by manipulating humans into authorizing access directly, MFA still provides a meaningful secondary control when it is phishing-resistant. FIDO2 hardware keys and passkeys leave nothing to read out over the phone: the credential is bound to the legitimate site’s origin and stays on the user’s own authenticator or device. Standard SMS OTP codes and push notifications, however, are frequently targeted by pretexting calls that request real-time authentication codes from victims.

Upgrading your MFA to phishing-resistant methods eliminates the credential-capture component of many hybrid pretexting attacks and forces attackers to construct more complex pretexts that are inherently more detectable.
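One practical starting point for that upgrade is an inventory of which accounts still have a relayable method enrolled. The sketch below assumes enrollment data has already been exported as a mapping from user to method labels. The labels and the `accounts_to_upgrade` function are hypothetical and would need mapping to whatever your identity provider actually reports.

```python
# Method labels are hypothetical; map them to what your identity provider reports.
PHISHING_RESISTANT = frozenset({"fido2_hardware_key", "passkey"})


def accounts_to_upgrade(enrollments: dict[str, set[str]]) -> list[str]:
    """List accounts with no methods, or with any method outside the resistant set."""
    return sorted(
        user
        for user, methods in enrollments.items()
        if not methods or not methods <= PHISHING_RESISTANT
    )
```

An account that has a passkey plus SMS OTP is still listed, because a caller can steer the victim toward the weaker method. Anything not explicitly known to be phishing-resistant is treated as needing review.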

Threat Intelligence and Pretexting Pattern Monitoring

Active threat intelligence feeds now include pretexting campaign signatures — specific impersonation scripts, targeted industry patterns, and active threat actor tactics. Financial services, healthcare, and technology organizations face disproportionate targeting and benefit most from industry-specific threat intelligence that surfaces the exact pretext patterns attackers are currently using against peers.

Penetration testing that includes social engineering scenarios is the most honest measurement of your organization’s actual pretexting resilience. A professional red team will test your help desk, your finance team, and your executives with the same pretexts real attackers use, and the results reveal precisely where your process controls fail before a real attacker finds those gaps.

Building broader online safety habits at the individual level and robust website and network protection at the organizational level creates the layered environment where pretexting has the least opportunity to succeed.

For reporting pretexting incidents, particularly those involving BEC or wire fraud, the FBI’s Internet Crime Complaint Center at ic3.gov is the primary reporting channel. Acting quickly after a BEC discovery significantly increases the chance of wire recall. Additionally, CISA’s Social Engineering guidance provides authoritative defensive frameworks covering pretexting, phishing, and vishing that organizations can implement directly into their security programs.

Frequently Asked Questions

What is pretexting in cybersecurity in simple terms?

Pretexting is when an attacker invents a believable fake identity and story to manipulate someone into revealing sensitive information, granting access, or sending money. Unlike phishing, which typically sends a single automated message, pretexting involves a constructed scenario — delivered by phone, email, or in person — that builds trust before making a request. Common examples include someone posing as an IT technician needing your password to “fix a problem,” a CEO urgently requesting a wire transfer, or a fake vendor updating payment details. The pretext makes the request feel legitimate, overriding the target’s natural caution.

How is pretexting different from phishing?

Phishing is a broad, often automated attack delivered primarily via email that sends deceptive messages at scale, hoping a percentage of recipients click a link or reveal credentials. Pretexting is a targeted, research-intensive technique that involves sustained human interaction to build credibility before making a malicious request. Phishing relies on volume; pretexting relies on quality of deception. Importantly, pretexting now accounts for over 50% of all social engineering incidents in 2025 — overtaking phishing in frequency — and drives the most financially costly attack category: Business Email Compromise, which generated $6.3 billion in losses in 2024 alone.

What are the most common pretexting attack examples?

The most common pretexting attacks in 2026 are: IT help desk impersonation, where an attacker poses as internal IT security and requests credentials or remote access to resolve a fake account problem; CEO fraud, where an attacker impersonates an executive and pressures finance teams into urgent wire transfers; vendor payment fraud, where a fake supplier representative requests updates to payment details; government or regulator impersonation, where an attacker creates urgency around a compliance audit; and security researcher impersonation, where an attacker offers to help “fix” a vulnerability while actually installing malware. Each exploits the same psychological triggers: authority, urgency, and familiarity.

How do organizations defend against pretexting attacks?

The most effective defenses against pretexting are process-based, not technical. Mandatory out-of-band verification for any request involving financial transactions, credential changes, or sensitive data disclosure is the single highest-impact control — any request received through one channel must be verified through an independent, pre-established channel before action is taken. Complementing this, organizations need documented identity verification protocols for help desk and finance teams, role-specific security awareness training that simulates pretexting scenarios, and dual-approval requirements for financial authorizations. Regular penetration testing that includes social engineering simulations measures actual resilience against these attacks.

Can AI make pretexting attacks impossible to detect?

AI makes pretexting significantly harder to detect by enabling attackers to generate highly personalized, grammatically flawless pretexts at scale, synthesize convincing voice clones from just seconds of audio, and produce deepfake video identities for real-time interactions. However, detection remains possible through process controls that AI cannot defeat: a mandatory call-back to a known, independently verified number; dual-approval requirements for financial actions; and verification codes pre-established between colleagues, which voice cloning alone cannot fake. The most robust defense against AI-powered pretexting is removing human discretion from high-value authorization decisions and replacing it with documented, auditable verification workflows that the attacker cannot shortcut regardless of how convincing their pretext appears.