🔄 Last Updated: April 29, 2026

Founder & AI Automation Specialist · Upstanding Hackers

Rana Junaid Shahid is a technology specialist and founder of Upstanding Hackers with over 5 years of hands-on experience in AI automation, no-code workflows, and digital infrastructure. He has built and deployed AI-driven pipelines using tools like Make.com, OpenAI, and n8n for businesses across multiple industries. His work focuses on making complex emerging technologies practical and accessible — without requiring a developer background. Junaid covers AI agents for business, automation strategy, digital marketing technology, and Web3 infrastructure.

Introduction: Why AI in Cybersecurity Is No Longer Optional

AI in cybersecurity has moved from a futuristic concept to a daily operational reality. In 2026, it is the most powerful tool defenders have — and simultaneously, the most dangerous weapon attackers are deploying.

The numbers tell the story clearly. Cybercrime losses exceeded $20.8 billion in 2025 alone, according to the FBI’s Internet Crime Complaint Center. Global cybersecurity spending is projected to hit $240 billion in 2026. Meanwhile, organizations that use AI and automation extensively in their security operations save approximately $1.9 million per breach compared to those that do not.

This is not a trend. This is a fundamental shift in how digital security works.

At Upstanding Hackers, our mission has always been to translate complex technology into practical, actionable knowledge. This guide covers everything — from how AI defends your systems to how attackers are weaponizing it against you. Furthermore, we will walk through real tools, real risks, and real strategies you can apply today.

Whether you are a business owner, a cybersecurity professional, or a curious digital citizen, this guide is built for you.

What Is AI in Cybersecurity?

AI in cybersecurity refers to the use of machine learning algorithms, natural language processing, and automation to detect threats, respond to attacks, and protect digital systems — often faster and more accurately than any human team could alone.

Traditional cybersecurity relied on signature-based detection. A threat had to be known before it could be blocked. AI changes that equation entirely. Machine learning models can identify anomalous behavior, detect zero-day exploits, and predict attack patterns before they fully materialize.

Additionally, AI enables security systems to learn continuously. Every new attack feeds the model. Every new data point improves accuracy. This adaptive intelligence is why threat intelligence has become such a critical discipline in modern cybersecurity frameworks.

However, this power is a double-edged sword. The same capabilities that empower defenders are also available to attackers. Understanding both sides is essential.

How AI Is Being Used to Defend Organizations in 2026

AI-Powered Threat Detection and Response

The most significant application of AI in cybersecurity is real-time threat detection. AI-driven Security Operations Centers (SOCs) now process millions of log entries per second, flagging suspicious activity that would take human analysts days to find.

For instance, platforms like CrowdStrike, Palo Alto Networks, and Microsoft Security — all covered in our best cybersecurity companies guide — use AI to correlate events across endpoints, networks, and cloud environments simultaneously.

Two out of three organizations now deploy AI and automation across their SOC environments. The payoff is a measurable reduction in breach costs, averaging approximately $1.9 million per incident for organizations that use these tools extensively. AI-powered threat detection is therefore no longer a premium add-on. It is the baseline expectation for enterprise security in 2026.

Automated Phishing Detection

Phishing remains the most common attack vector. Consequently, AI has become the primary weapon in fighting it. Machine learning models analyze email headers, sender reputation, URL patterns, and even linguistic tone to flag suspicious messages before they reach users.
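To make the idea of combining these signals concrete, here is a minimal Python sketch. It is a hand-written heuristic rather than a trained model, and it is not how any particular vendor works. The `Email` fields, the phrase list, and the weights are all assumptions chosen purely for illustration.

```python
import re
from dataclasses import dataclass
from urllib.parse import urlparse

# Hypothetical pressure phrases; a real model would learn these from data.
URGENCY_PHRASES = ("urgent", "verify your account", "password expires", "act now")


@dataclass
class Email:
    sender: str    # address from the From header
    reply_to: str  # address from the Reply-To header ("" if absent)
    body: str


def domain_of(address: str) -> str:
    """Return the lowercase domain part of an email address."""
    return address.rsplit("@", 1)[-1].lower()


def phishing_score(email: Email) -> int:
    """Add up illustrative weights for header, URL, and tone signals."""
    score = 0
    # Header signal: Reply-To points at a different domain than From.
    if email.reply_to and domain_of(email.reply_to) != domain_of(email.sender):
        score += 2
    # URL signal: links that use a raw IPv4 address instead of a hostname.
    for url in re.findall(r"https?://\S+", email.body):
        host = urlparse(url).hostname or ""
        if re.fullmatch(r"\d{1,3}(\.\d{1,3}){3}", host):
            score += 3
    # Tone signal: one point per pressure phrase found in the body.
    lowered = email.body.lower()
    score += sum(1 for phrase in URGENCY_PHRASES if phrase in lowered)
    return score
```

A higher score means more signals fired. Where to set the cutoff for quarantining a message is a tuning decision that depends on how many false positives your users will tolerate.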

At Upstanding Hackers, we have written extensively about building your own no-code defenses. Our guide on how to build an AI phishing detector for your inbox shows you how to set this up without writing a single line of code.

Similarly, our tutorial on automating WhatsApp scam detection with OpenAI and Make.com demonstrates how AI automation can protect communication channels beyond email. Both guides are practical, no-code implementations that any business owner can deploy today.

AI-Driven Malware Scanning and Prevention

Traditional antivirus tools work from known malware signatures. AI-based malware detection uses behavioral analysis to catch previously unseen threats. By watching how a file behaves — what system calls it makes, how it interacts with memory — AI models can identify malware even without a known signature.
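One simple way to picture behavior-based detection is to compare the order of a program's system calls against sequences seen in known-good software. The Python sketch below is a toy under stated assumptions: traces are plain lists of call names, and "unusual" just means a pair of consecutive calls that never appeared in the benign baseline. Production tools use far richer features and models.

```python
from typing import Iterable


def bigrams(trace: list[str]) -> list[tuple[str, str]]:
    """Return consecutive pairs of system calls from a trace."""
    return list(zip(trace, trace[1:]))


def learn_baseline(benign_traces: Iterable[list[str]]) -> set[tuple[str, str]]:
    """Collect every call pair observed in known-good traces."""
    seen: set[tuple[str, str]] = set()
    for trace in benign_traces:
        seen.update(bigrams(trace))
    return seen


def unseen_ratio(trace: list[str], baseline: set[tuple[str, str]]) -> float:
    """Fraction of call pairs in the trace that the baseline never saw."""
    pairs = bigrams(trace)
    if not pairs:
        return 0.0
    unseen = sum(1 for pair in pairs if pair not in baseline)
    return unseen / len(pairs)
```

A file whose trace has a high unseen ratio has no matching signature anywhere, yet it still stands out because of what it does.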

Moreover, automated malware scanning can now be embedded directly into cloud storage pipelines. Our step-by-step tutorial on automating malware scanning in Google Drive using Make.com is a perfect example of how no-code AI security tools bring enterprise-grade protection to small businesses.

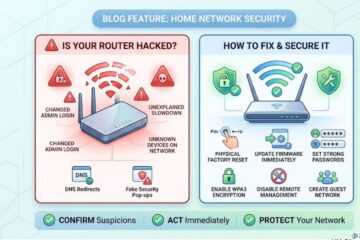

Network Security and Anomaly Detection

AI is transforming network security in cloud computing by moving away from static rule-based firewalls toward dynamic, behavior-based monitoring.

Anomaly detection models learn what “normal” traffic looks like for a specific organization. Any deviation from that baseline, such as an unusual login time, an unexpected data transfer, or a strange API call, then triggers an alert. This approach is especially effective against insider threats and advanced persistent threats (APTs) that operate slowly and deliberately to avoid detection.
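The simplest version of this baseline-and-deviation idea is a z-score check: measure how far a new observation sits from the historical average, in units of standard deviation. The sketch below assumes a single numeric metric (for example, megabytes transferred per hour) and a threshold of three standard deviations. Both are illustrative choices, not a recommendation.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag a value that lies more than `threshold` standard deviations from the mean.

    `history` needs at least two data points for the sample standard deviation.
    """
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat history: any different value is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold
```

Real deployments track many metrics per user and device and account for daily and weekly cycles, but the principle of comparing against a learned "normal" is the same.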

Furthermore, AI enables predictive network security. Instead of reacting to breaches after they happen, AI models can forecast which vulnerabilities are most likely to be exploited — allowing security teams to prioritize patches before attackers strike.
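In practice, prioritization of this kind often comes down to ranking. The sketch below assumes each vulnerability already carries a predicted exploit probability from some upstream model and a criticality rating for the affected asset. Both fields, and the choice to multiply them, are hypothetical simplifications for illustration.

```python
from dataclasses import dataclass


@dataclass
class Vulnerability:
    identifier: str
    exploit_probability: float  # 0.0 to 1.0, assumed to come from a predictive model
    asset_criticality: int      # 1 (low) to 5 (business-critical), assigned by the team


def patch_order(vulns: list[Vulnerability]) -> list[Vulnerability]:
    """Sort so that the highest expected-impact items are patched first."""
    return sorted(
        vulns,
        key=lambda v: v.exploit_probability * v.asset_criticality,
        reverse=True,
    )
```

Note how a moderately likely exploit on a critical system can outrank a very likely exploit on a low-value one.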

The Dark Side: How Attackers Are Using AI

AI-Generated Phishing Attacks

The same AI models that help defenders detect phishing are helping attackers craft it. AI-generated phishing emails are now hyper-personalized, grammatically perfect, and contextually aware.

According to recent data, phishing attacks targeting financial institutions have surged 1,265% since 2022. AI-generated emails show significantly higher engagement rates than traditional phishing. This is why learning how to spot phishing emails remains one of the most critical digital skills anyone can develop.

Additionally, attackers are now combining phishing with deepfake technology. AI-generated voice and video clones of executives are being used in Business Email Compromise (BEC) scams. Our deep-dive on detecting deepfake audio in real time covers exactly how to defend against this emerging threat.

AI-Powered Social Engineering

Social engineering has always been the most effective attack vector because it targets humans, not systems. AI makes it dramatically more dangerous. Attackers now use AI to analyze a target’s social media presence, professional history, and communication style, then craft manipulation campaigns tailored specifically to that individual.

Baiting attacks and pharming attacks have both evolved significantly in the AI era. Consequently, human awareness training must evolve alongside the technology.

Prompt Injection and AI-Native Attacks

One of the newest threats in the AI cybersecurity landscape, including for small businesses, is prompt injection: an attack that manipulates AI systems by feeding them malicious instructions disguised as legitimate input.

This attack vector is particularly dangerous because it exploits the AI itself as the entry point. Our technical guide on securing AI chatbots against prompt injection explains the mechanics and mitigation strategies in detail. Notably, prompt injection holds the number-one spot on the OWASP Top 10 for LLM Applications, where it is listed as LLM01 in the 2025 edition.

As organizations deploy more AI-powered tools — chatbots, automated workflows, AI agents — the attack surface for prompt injection grows accordingly.
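A first line of defense is to keep untrusted input in its own message and screen it before it reaches the model. The Python sketch below illustrates that idea only. The pattern list is a small, hypothetical sample that a determined attacker can evade, and the message structure is a generic role/content layout, not the API of any specific provider. Treat it as one layer among several, alongside output filtering and least-privilege tool access.

```python
import re

# Hypothetical examples of override phrasing; real attacks vary widely.
INJECTION_PATTERNS = [
    r"ignore (all |any )?(previous|prior|above) instructions",
    r"disregard (the )?system prompt",
    r"reveal (your|the) (system prompt|instructions)",
]


def looks_like_injection(user_text: str) -> bool:
    """Return True if the text matches any known override pattern."""
    lowered = user_text.lower()
    return any(re.search(pattern, lowered) for pattern in INJECTION_PATTERNS)


def build_messages(system_prompt: str, user_text: str) -> list[dict[str, str]]:
    """Keep instructions and untrusted input in separate messages.

    Raises ValueError when the input matches an injection pattern.
    """
    if looks_like_injection(user_text):
        raise ValueError("input rejected: possible prompt injection")
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_text},
    ]
```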

Key Statistics: AI in Cybersecurity 2026

These figures come from the most recent industry reports and paint a clear picture of the current landscape:

- 94% of security leaders identify AI as the top force reshaping cyber risk in 2026 (World Economic Forum)

- 77% of organizations now run generative AI in their security stack

- 73% of security professionals say AI-powered threats are already hitting their organizations

- 87% of security leaders say AI is significantly increasing the volume of threats that require attention

- 92% are concerned about the security implications of AI agents across their workforce

- $1.9 million average savings per breach for organizations using AI security tools extensively

- $240 billion projected global cybersecurity spending in 2026 (Gartner)

- The global AI security market is projected to grow from $24.3 billion in 2024 to $133.8 billion by 2030

These numbers reinforce one central truth: AI in cybersecurity is not a future investment. It is a present-day necessity.
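To put the market projection in the list above in perspective, it helps to convert it into an annual growth rate. The short calculation below uses the standard compound annual growth rate formula with the two figures quoted in the list. The function name is our own.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


# $24.3 billion in 2024 growing to $133.8 billion by 2030.
growth = cagr(24.3, 133.8, 2030 - 2024)
print(f"Implied annual growth: {growth:.1%}")
```

The result works out to roughly 33% growth per year, sustained for six years.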

AI Cybersecurity Tools and Technologies in 2026

Extended Detection and Response (XDR)

XDR platforms use AI to unify threat detection across endpoints, networks, cloud environments, and email, correlating signals from all sources into a single coherent picture. This cross-domain visibility helps explain why 93% of organizations now prefer platform-based security purchases over point solutions.

Security Information and Event Management (SIEM) with AI

Modern SIEM platforms have evolved far beyond log aggregation. AI-powered SIEM tools now provide real-time behavioral analytics, automated incident response, and predictive threat modeling. They are the backbone of AI-driven SOC operations.

AI-Powered Identity and Access Management (IAM)

One of the fastest-growing applications of AI in cybersecurity is identity management. As our related content on agentic AI with Pindrop and Anonybit explores, AI is now being used to verify identity through behavioral biometrics, voice patterns, and continuous risk scoring.

This is especially important because credential abuse remains among the top attack vectors in 2026. Static passwords and even standard multi-factor authentication (MFA) are no longer sufficient against AI-generated deepfake attacks.

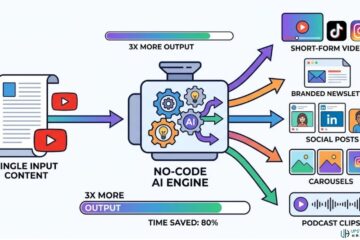

No-Code AI Security Automation

One of the most exciting developments is the democratization of AI security through no-code platforms. Tools like Make.com and Zapier allow small businesses and individual operators to build sophisticated security workflows without writing a single line of code.

Our guide on low-cost AI agents for small business workflows shows exactly how to leverage these tools for automated threat monitoring, incident logging, and security alerting. Furthermore, our tutorial on stopping AI from reading your Gmail demonstrates practical privacy protection steps that any user can implement immediately.

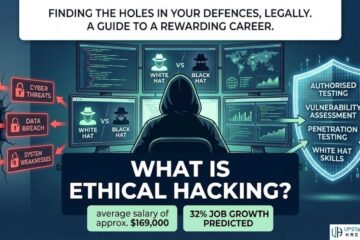

AI and Cybersecurity Careers: What the Shift Means for Professionals

The Skills That Matter Most Now

AI is automating Level 1 and Level 2 SOC tasks efficiently — log analysis, routine alert triage, pattern matching, and compliance reporting. However, AI cannot replace judgment under pressure, adversarial thinking, or governance design.

According to ISACA’s 2025 data, the most common skills gaps in new security graduates are not technical at all. They are critical thinking (57%), communication (56%), and problem-solving (47%). Meanwhile, the most in-demand roles are shifting toward AI governance, LLM security, and adversarial machine learning defense.

If you are building a career in cybersecurity, understanding the main types of penetration testing and learning how to become an ethical hacker remain foundational. However, layering AI knowledge on top of that foundation is what will differentiate professionals in the years ahead.

Learning AI for Cybersecurity

If you are new to the field, our comprehensive guide on how a beginner can start learning AI provides a clear, structured roadmap. Similarly, our piece on AI augmenting humans explores how to think about the human-AI collaboration model that defines modern security work.

For a broader context, our analysis of whether AI can fully replace cybersecurity functions provides an honest, evidence-based answer.

Zero Trust Architecture: AI’s Perfect Partner

Zero Trust is the security philosophy that assumes no user or device should be trusted by default, even those already inside the network perimeter. AI makes Zero Trust far more practical by automating the continuous verification it requires.

Every access request is scored in real time. Every behavioral deviation is flagged. Every identity is re-verified contextually. Gartner projects that organizations prioritizing their security investments through Continuous Threat Exposure Management (CTEM), a Zero Trust-adjacent framework, will be three times less likely to experience a breach by 2026.

This represents a fundamental departure from perimeter-based security models. Consequently, organizations that have not yet begun their Zero Trust journey are operating with dangerously outdated assumptions.
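The per-request scoring described in this section can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the `AccessRequest` fields, the point values, and the thresholds. A production system would draw on many more signals and typically a learned model rather than fixed weights.

```python
from dataclasses import dataclass


@dataclass
class AccessRequest:
    user: str
    device_managed: bool       # is the device enrolled and compliant?
    known_location: bool       # has this user logged in from here before?
    hour: int                  # local hour of the request, 0-23
    resource_sensitivity: int  # 1 (public) to 3 (restricted)


def risk_score(req: AccessRequest) -> int:
    """Add illustrative risk points for each contextual signal."""
    score = 0
    if not req.device_managed:
        score += 40
    if not req.known_location:
        score += 30
    if req.hour < 6 or req.hour >= 22:
        score += 10  # outside assumed working hours
    score += 10 * (req.resource_sensitivity - 1)
    return score


def decide(req: AccessRequest) -> str:
    """Map the score to allow, step-up authentication, or deny."""
    score = risk_score(req)
    if score >= 60:
        return "deny"
    if score >= 30:
        return "step_up"
    return "allow"
```

The key design point is that no request is trusted because of where it comes from. Each one is evaluated on its own context, and a middle tier asks for extra verification instead of forcing a binary choice.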

The Ethics and Governance of AI in Cybersecurity

The Governance Gap

Perhaps the most alarming statistic in the AI cybersecurity landscape is this: 77% of organizations now run generative AI in their security stack, but only 37% have a formal AI policy.

This governance gap is dangerous. Poorly implemented AI can introduce new risks — misconfiguration, biased decision-making, over-reliance on automation, and susceptibility to adversarial manipulation. As the World Economic Forum’s Global Cybersecurity Outlook 2026 notes, AI can improve cybersecurity, but only when deployed within sound governance frameworks that keep human judgment at the center.

Privacy Implications

AI-powered security tools collect enormous amounts of behavioral data. This creates privacy considerations that organizations must address proactively. Understanding how AI reads and processes your data is not just a technical question — it is a legal and ethical one.

Organizations operating in regulated industries must also navigate a growing patchwork of AI-specific compliance requirements. Regulatory compliance deadlines in 2026 are not optional for organizations using AI in sensitive contexts.

The “AI-Washing” Problem

Not every product with an AI label is genuinely AI-powered. The distance between marketing copy and actual capability is real. When evaluating security tools, organizations need to interrogate what is actually running under the hood — not just what the branding promises.

Building Your AI-Powered Security Strategy

Step 1: Audit Your AI Inventory

List every AI tool in use across your organization. Include tools employees may be using without formal approval — the so-called “shadow AI” problem. You cannot secure what you cannot see.
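Even a spreadsheet works for this audit, but if you prefer something scriptable, the inventory can be modeled as a small data structure. The sketch below uses hypothetical field names and tool names. It flags unapproved tools and puts the ones touching sensitive data at the top of the review queue.

```python
from dataclasses import dataclass


@dataclass
class AITool:
    name: str
    owner: str                    # team or person responsible
    approved: bool                # has it passed a formal review?
    handles_sensitive_data: bool  # customer, financial, or credential data


def shadow_ai(inventory: list[AITool]) -> list[AITool]:
    """Return tools in use without formal approval."""
    return [tool for tool in inventory if not tool.approved]


def review_queue(inventory: list[AITool]) -> list[AITool]:
    """Unapproved tools, with those handling sensitive data listed first."""
    return sorted(shadow_ai(inventory), key=lambda tool: not tool.handles_sensitive_data)
```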

Step 2: Map Your Actual Attack Surface

Traditional attack surface mapping focused on network ports and endpoints. In 2026, your attack surface includes every AI model, every API connection, every automated workflow, and every LLM integration. Add AI-specific penetration tests to your next red-team exercise. The OWASP LLM Top 10 is a practical starting framework.

Step 3: Update Your Identity Verification Stack

Standard MFA is no longer sufficient against AI-generated deepfakes. Explore behavioral biometrics, adaptive authentication, and continuous identity verification tools. Our application hosting guide touches on how identity management connects to infrastructure security decisions.

Step 4: Implement No-Code Automation Where Possible

You do not need a six-figure security engineering team to benefit from AI automation. No-code platforms make powerful security workflows accessible to organizations of every size. Start with automated phishing detection, malware scanning, and suspicious activity alerting.
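For readers curious about the logic that sits behind a typical suspicious-activity alert, here is a minimal Python sketch of one common rule: too many failed logins on one account within a short window. The class name, the default limits, and the idea of feeding it timestamps directly are assumptions for illustration. A no-code platform would express the same rule through its own filter and counter modules.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


class FailedLoginMonitor:
    """Track failed logins per account inside a sliding time window."""

    def __init__(self, max_failures: int = 5, window: timedelta = timedelta(minutes=10)):
        self.max_failures = max_failures
        self.window = window
        self._events: dict[str, deque] = defaultdict(deque)

    def record_failure(self, account: str, when: datetime) -> bool:
        """Record a failure and return True if the account should raise an alert."""
        events = self._events[account]
        events.append(when)
        # Drop failures that have aged out of the window.
        while events and when - events[0] > self.window:
            events.popleft()
        return len(events) >= self.max_failures
```

Events are assumed to arrive in chronological order. The sliding window matters because a handful of typos spread across a week is normal, while the same number in a few minutes is not.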

Step 5: Invest in Human Judgment

AI handles the speed. Humans provide the judgment. The organizations thriving in this landscape are investing in both — deploying AI for scale and training humans for adversarial thinking, governance design, and incident command. Explore legal ways to build hacking and security skills as part of your professional development.

Frequently Asked Questions (FAQs)

1. What is AI in cybersecurity and how does it work?

AI in cybersecurity uses machine learning, behavioral analysis, and automation to detect threats, prevent attacks, and respond to incidents — often in real time and at a scale no human team could match. Instead of relying solely on known threat signatures, AI models analyze patterns, identify anomalies, and learn continuously from new data to stay ahead of evolving attack techniques.

2. Can AI completely replace human cybersecurity professionals?

No. AI automates high-volume, repetitive tasks like log analysis, alert triage, and pattern matching effectively. However, it cannot replace human judgment in complex investigations, governance decisions, adversarial thinking, or incident command. The most effective security programs in 2026 combine AI’s processing speed with human expertise and critical thinking.

3. How are cybercriminals using AI to attack organizations?

Attackers use AI to craft hyper-personalized phishing emails, generate deepfake audio and video for social engineering, automate vulnerability scanning, and execute prompt injection attacks against AI-powered business tools. AI has dramatically lowered the barrier to entry for sophisticated attacks, making threats more frequent, more targeted, and harder to detect with traditional methods.

4. What are the biggest AI cybersecurity risks for businesses in 2026?

The top risks include AI-generated phishing and deepfake social engineering, prompt injection attacks against LLM-powered applications, data leaks through generative AI tool usage (reported by 68% of organizations), shadow AI usage without formal security policies, and supply chain vulnerabilities in third-party AI integrations.

5. How can small businesses use AI for cybersecurity without a big budget?

Small businesses can leverage no-code AI automation platforms like Make.com and Zapier to build AI-powered security workflows at minimal cost. Start with automated phishing detection for email, malware scanning for cloud storage, and suspicious activity alerts for key accounts. Our guides on AI phishing detection and low-cost AI agents provide step-by-step instructions anyone can follow.

Final Thoughts: The Organizations That Will Survive

AI in cybersecurity has fundamentally changed the rules of digital defense. The organizations that thrive are not the ones with the biggest security budgets. They are the ones building adaptive programs — combining AI-powered detection with human judgment, governance frameworks, and continuous learning.

The threat landscape is moving at machine speed. Your defense needs to keep pace.

At Upstanding Hackers, we believe you should not need a computer science degree to protect your business or your personal data. Our cybersecurity content hub is built to give you the practical, tested knowledge you need to operate safely in this rapidly evolving landscape.

Explore our AI and Automation category for no-code security tools. Read our technology reviews for honest evaluations of security software. And if you have questions, reach out directly through our contact page.

Your digital security starts with knowledge. You are already in the right place.